A Recipe for Spaghetti Code in LabVIEW (Part 1)

Editor's note (December 2025): We originally published this in 2019, and it remains one of our most-read posts six years later. The principles haven't changed—if anything, they've become more critical as LabVIEW projects grow in complexity.

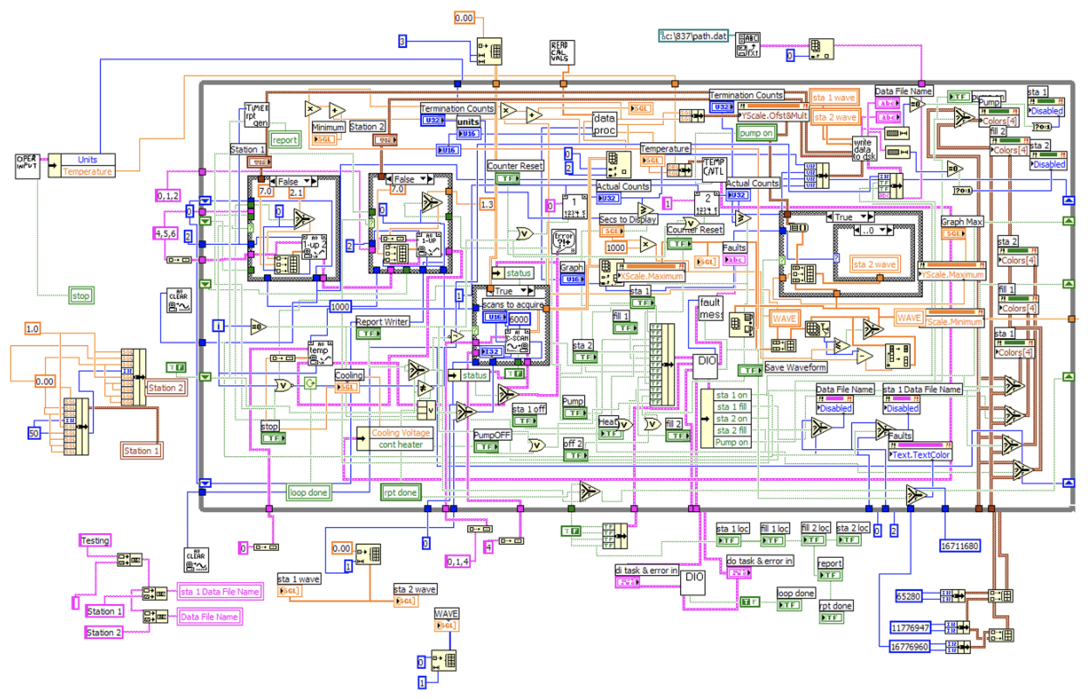

Have you ever wondered how a simple LabVIEW VI can turn into a tangled mess of wires that barely fits on your screen? Have you ever tried cleaning up that code only to find that it has lots of "quirks" in it? Then, you're not alone...

This has happened to just about every engineer who has ever programmed in LabVIEW, including me! Fortunately, it doesn't have to end up this way. There are a lot of simple programming practices and tools that can help.

Spoiler: the solution is not to buy a 49" ultrawide monitor (although that would be cool).

So, how does a simple LabVIEW VI turn into a tangled mess of spaghetti code?

Here's how it typically happens...

You're in the lab or out on the factory floor, and you need to get some work done.

Maybe you have to acquire some data from an instrument and plot it to a graph. So, you create a new VI and drop your instrument's Acquire.vi onto the block diagram and wire it up to a graph.

You need to add some more features to the code (obviously).

So, you add a few buttons and sliders to control some other hardware -- you need to be able to open a valve or, perhaps, move a motor. So, you wire the button up to the valve's Open-Close.vi and the slider up to the motor's Move.vi.

It's working pretty well, so now it needs to work like a "real" application.

You wrap all the code inside a big While Loop so that it will keep running continuously. You add some initialization and some shutdown code. Congratulations, you've got a "real" application!

Of course, you need more features and functionality.

Your basic system is running well, so now you write a simple control algorithm or data processing routine that executes while the main loop is running -- you want to see how these controls affect the acquired data. Your experiment is up and running!

And, even more functionality is needed.

Amazingly, things are working very well, and you continue to bolt on new functionality to your application:

- You save data to a file...

- You load settings from a configuration...

- And then some more features...

- And some more...

And then, maybe all at once or gradually over time, you realize what has happened...

You've created a monster!

Your block diagram has grown too big to fit on your screen -- in fact it wouldn't fit onto two screens (or maybe even four). You realize that the wires of your LabVIEW code are starting to resemble a plate of rainbow spaghetti. You wonder, how do I fix this?

So, you try to clean up the code.

You create local variables to tidy up the wires. However, this seems to have introduced odd behavior: the local variables have changed the flow of data that used to determine execution order, so the code now has race conditions. To make matters worse, the code is impossible to debug, because it doesn't fit on one screen and wires are going every which way.
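
LabVIEW block diagrams are graphical, so what follows is only a text-language analogy in Python, not LabVIEW code. It steps through one possible interleaving of two parallel loops that each read a shared value, modify it, and write it back, which is the kind of read-modify-write pattern that local variables make easy to create. The names (`shared`, `read_modify_write`, `loop_a`, `loop_b`) are hypothetical, and the interleaving is scripted by hand so that the lost update is reproducible rather than timing-dependent.

```python
# Text-language analogy (not LabVIEW): two parallel loops doing
# read-modify-write on one shared value, much like a local variable.
# All names here are hypothetical and chosen for illustration.

shared = {"count": 0}  # stands in for a front-panel control or indicator


def read_modify_write(amount):
    """Generator that pauses between the read and the write,
    so another loop can be interleaved in between."""
    value = shared["count"]            # read the "local variable"
    yield                              # another loop may run here
    shared["count"] = value + amount   # write back a possibly stale result


def run_interleaved():
    """Both loops read before either writes, so one update is lost."""
    shared["count"] = 0
    loop_a = read_modify_write(1)
    loop_b = read_modify_write(10)
    next(loop_a)         # loop A reads 0
    next(loop_b)         # loop B reads 0
    next(loop_a, None)   # loop A writes 1
    next(loop_b, None)   # loop B writes 10, overwriting A's update
    return shared["count"]


def run_sequenced():
    """Each loop finishes before the next starts, as explicit
    data dependency would enforce."""
    shared["count"] = 0
    for amount in (1, 10):
        for _ in read_modify_write(amount):
            pass
    return shared["count"]


if __name__ == "__main__":
    print("interleaved:", run_interleaved())
    print("sequenced:  ", run_sequenced())
```

With the scripted interleaving, the final value is 10 rather than 11, because the first write is silently overwritten. When the second step depends on the result of the first, as it would if the value were passed along a wire, the ordering is explicit and nothing is lost.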

But, maybe you're in too deep.

You finally realize (or accept) that it's time to write some "real code". You've heard that "software engineering" is a good thing. You've heard other LabVIEW developers talk about "frameworks" and "templates", but you haven't had time to learn about all that stuff. You've got a system to design and control. You've got data to collect and experiments to run.

What do you do now?

If this story sounds familiar, you're not alone. This is the typical progression: LabVIEW makes it easy for engineers to write code quickly, and that code often turns out to be hard to maintain. However, it's not LabVIEW's fault, nor is it the fault of the engineer. It happened because there were no good guidelines within which to develop the application.

🛠️ Ready for the Solution?

In Part 2, we cover the 3 steps to avoiding spaghetti code, plus the template our engineers use on projects for NASA, Ford, and many other organizations.

Read Part 2 →

PS: I'm interested to hear about how *you* have solved these challenges (and whether my description of the problem rings true). You can message me on Twitter @jimkring.
